Respiratory motion correction for abdominal PET-MRI studies

PET and MRI are powerful imaging technologies: PET offers high sensitivity, and MRI provides superior anatomic detail. Together they could be ideal for evaluating the upper abdomen. However, PET requires long acquisition times, during which organs move with respiration, and this motion can blur the images. MRI, especially standard DCE-MRI, can scan the chosen field of view in a shorter time, but it requires the patient to follow respiratory instructions and to suspend respiration for the duration of each breath-hold sequence, usually 14–20 s. Moreover, even in patients with adequate breath-hold ability, the quality and diagnostic information of DCE-MRI also depend on the patient’s hemodynamics and on the timing of contrast agent injection relative to data acquisition. These variables explain the respiratory artifacts and mistimed contrast-enhancement phases that occur in DCE-MRI.

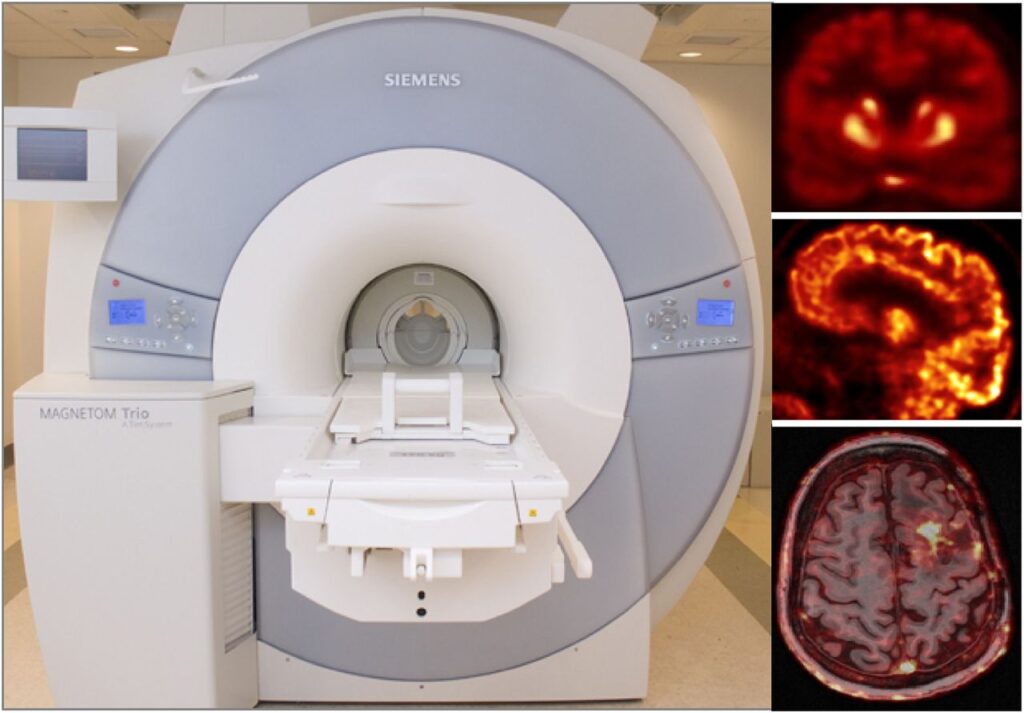

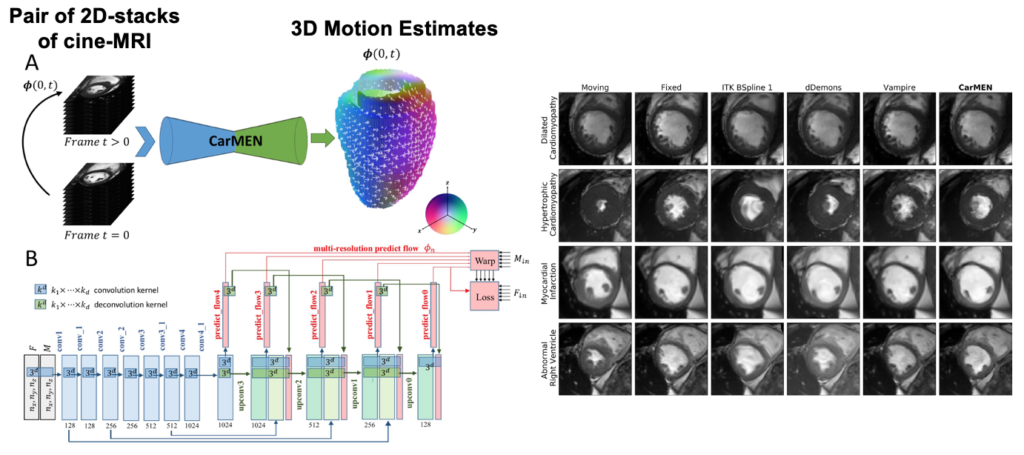

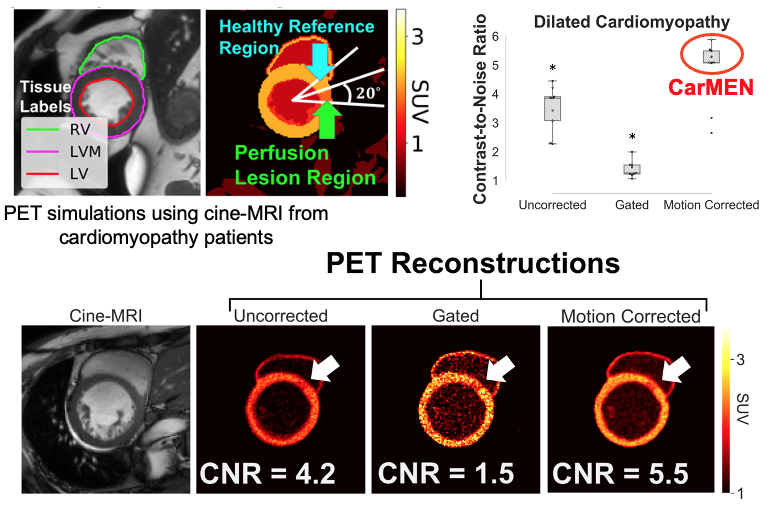

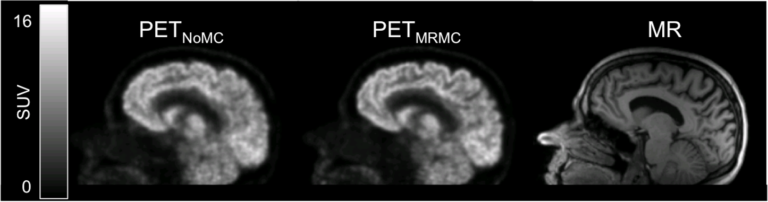

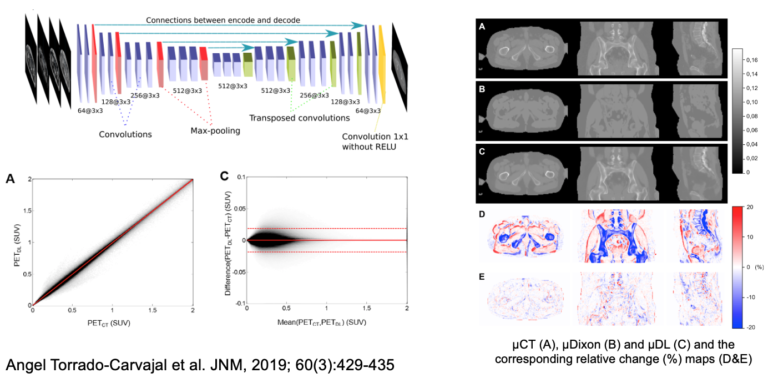

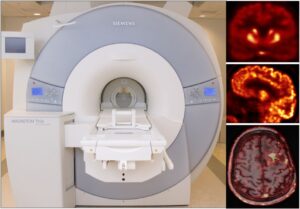

We presented and evaluated in vivo a comprehensive approach for self-gated MR motion modeling applied to concurrent respiratory motion compensation of PET and DCE-MRI data acquired simultaneously in an integrated PET/MR system.
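The summary above does not spell out how the data are gated. As a rough illustration only, and not the authors' implementation, the sketch below shows one common form of respiratory gating: assigning each sample of a respiratory surrogate signal to an amplitude bin (gate) so that data from similar breathing positions can be grouped before motion compensation. The function name `amplitude_gate`, the synthetic sinusoidal surrogate, and the choice of four gates are all assumptions made for this example.

```python
import numpy as np


def amplitude_gate(signal, n_gates):
    """Assign each surrogate sample to one of n_gates amplitude bins.

    Hypothetical helper: bin edges are quantiles of the signal, so each
    gate holds roughly the same number of samples.
    """
    signal = np.asarray(signal, dtype=float)
    # Interior quantile edges (n_gates - 1 of them), in increasing order.
    edges = np.quantile(signal, np.linspace(0.0, 1.0, n_gates + 1)[1:-1])
    # np.digitize returns a bin index in 0..n_gates-1 for each sample.
    return np.digitize(signal, edges)


# Synthetic surrogate (assumption): 60 s of breathing at 0.25 Hz, sampled at 10 Hz.
t = np.arange(600) / 10.0
surrogate = np.sin(2.0 * np.pi * 0.25 * t)

gates = amplitude_gate(surrogate, n_gates=4)
counts = np.bincount(gates, minlength=4)
print("samples per gate:", counts)
```

In a real acquisition the surrogate would be derived from the measured data rather than simulated, and each gate would then be reconstructed and registered to a reference respiratory position; those steps are outside the scope of this sketch.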

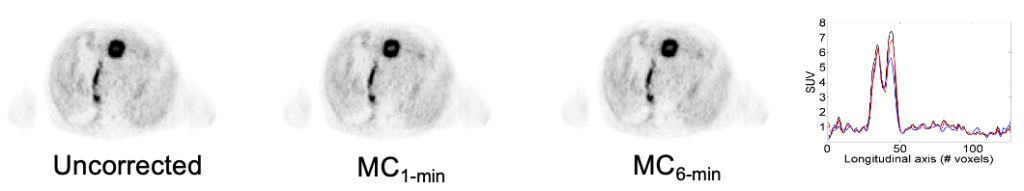

Fully registered, motion-corrected PET images and diagnostic DCE-MR images were obtained with negligible prolongation of acquisition time compared with standard breath-hold techniques. MR and PET image quality, as well as tracer uptake quantification, improved compared with conventional methods (Fuin 2018).

This approach was subsequently evaluated clinically in collaboration with Dr. Onofrio Catalano to demonstrate that motion-corrected PET/MRI produced better PET images and reduced the spatial mismatch between the two modalities (Catalano 2018).